KNF Onboarding Walkthrough (Work in Progress)

NOTE: this section uses pre-SOL006 descriptors, which must be converted before they can be used with Release 9+.

Introduction

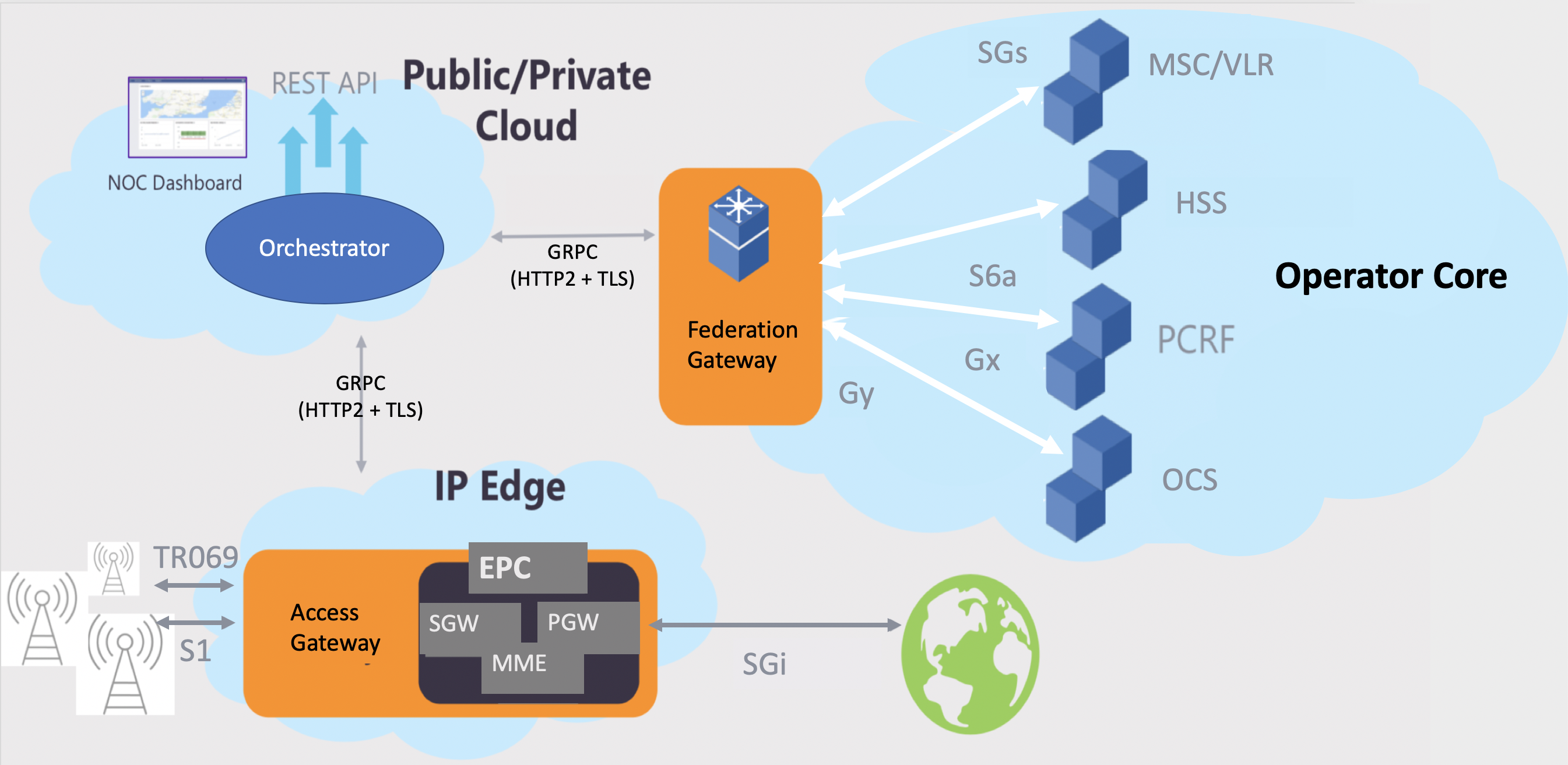

This section uses Facebook’s Magma, an open-source software platform that gives network operators an open, flexible and extendable mobile core network solution.

This example focuses on deploying the Magma Orchestrator component as a KNF and then integrating it with a Magma AGW deployed as a VNF. The walkthrough is kept concise for now; content will be added as K8s support in OSM is enhanced.

Final packages used throughout this example can be found here

KNF Requirements

A Helm Chart or Juju Bundle already contains all the characteristics of a Kubernetes Network Function in OSM. OSM only needs to be connected to a Kubernetes cluster on which to run it.

The Helm Chart on which this KNF is based has been contributed for OSM here

Building the KNF Descriptor

With the Helm Chart available, the following KNFD can be built. Create a YAML file within the following directory structure:

    fb_magma_knf
    |
    --- fb_magma_knfd.yaml

The descriptor contents would be:

    vnfd-catalog:
        schema-version: '3.0'
        vnfd:
        -   connection-point:
            -   name: mgmt
            description: KNF with KDU using a helm-chart for Facebook magma orc8r
            id: fb_magma_knf
            k8s-cluster:
                nets:
                -   external-connection-point-ref: mgmt
                    id: mgmtnet
            kdu:
            -   helm-chart: magma/orc8r
                name: orc8r
            mgmt-interface:
                cp: mgmt
            name: fb_magma_knf
            short-name: fb_magma_knf
            version: '1.0'

The main modelling is under the “kdu” list. The rest of the elements are either descriptive or not connected to a specific feature at this point.

Package your VNF:

tar -cvzf fb_magma_knf.tar.gz fb_magma_knf/
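The packaging step can be wrapped in a small helper that checks the directory layout before creating the archive. This is a minimal sketch: `build_package` is a hypothetical name, not an OSM client command, and it assumes the descriptor sits directly inside the package directory and that the helper is run from the parent directory.

```shell
# Hypothetical helper (not part of the OSM client): archive a descriptor
# directory as <dir>.tar.gz after checking that it contains a YAML file.
build_package() {
    pkg_dir=${1%/}                      # strip a trailing slash, if any
    if [ ! -d "$pkg_dir" ]; then
        echo "error: directory '$pkg_dir' not found" >&2
        return 1
    fi
    # Require at least one descriptor file directly inside the directory.
    if ! ls "$pkg_dir"/*.yaml >/dev/null 2>&1; then
        echo "error: no .yaml descriptor in '$pkg_dir'" >&2
        return 1
    fi
    # Create the archive, then list its contents as a quick sanity check.
    tar -czf "$pkg_dir.tar.gz" "$pkg_dir"/ && tar -tzf "$pkg_dir.tar.gz"
}

# Usage: build_package fb_magma_knf
```

The same helper would apply to any other package directory that follows this layout.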

Launching the KNF Package

To launch the KNF package, you need to first add the repository that contains the Helm chart.

osm repo-add --type helm-chart --description "Repository for Facebook Magma helm Chart" magma https://felipevicens.github.io/fb-magma-helm-chart/

Now include the KNF in an NS package. Create one from a YAML file, using the following structure:

    fb_magma_ns
    |
    --- fb_magma_nsd.yaml

The descriptor contents would be:

    nsd-catalog:
        nsd:
        -   constituent-vnfd:
            -   member-vnf-index: orc8r
                vnfd-id-ref: fb_magma_knf
            description: NS consisting of a KNF fb_magma_knf connected to mgmt network
            id: fb_magma_ns
            name: fb_magma_ns
            short-name: fb_magma_ns
            version: '1.0'
            vld:
            -   id: mgmtnet
                mgmt-network: true
                name: mgmtnet
                type: ELAN
                vim-network-name: mgmt
                vnfd-connection-point-ref:
                -   member-vnf-index-ref: orc8r
                    vnfd-connection-point-ref: mgmt
                    vnfd-id-ref: fb_magma_knf

The main modelling is under the “constituent-vnfd” list. The rest of the elements are either descriptive or not connected to a specific feature at this point.

Package your NS:

tar -cvzf fb_magma_ns.tar.gz fb_magma_ns/

With both packages ready, upload them to OSM:

osm nfpkg-create fb_magma_knf.tar.gz

osm nspkg-create fb_magma_ns.tar.gz

Finally, the package can be tested on any K8s cluster registered with OSM. For details on how to register a K8s cluster, visit the user guide. Then instantiate the NS:

osm ns-create --ns_name magma_orc8r --nsd_name fb_magma_ns --vim_account <vim_name>

Please note that this KNF needs three to four external IPs, which must be made available beforehand by integrating MetalLB or a similar mechanism into your K8s cluster. If these IPs are not available, the deployment will fail.
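One way to spot missing external IPs is to look for LoadBalancer services whose EXTERNAL-IP column still shows `<pending>`. The following is a minimal sketch that filters the standard `kubectl get svc` table; `pending_loadbalancers` is a hypothetical helper name and YOUR_NAMESPACE is a placeholder for the namespace used by the deployment.

```shell
# Print the names of LoadBalancer services that have no external IP yet.
# Reads `kubectl get svc` table output on stdin, whose columns are:
# NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE
pending_loadbalancers() {
    awk '$2 == "LoadBalancer" && $4 == "<pending>" { print $1 }'
}

# Usage (YOUR_NAMESPACE is a placeholder):
#   kubectl get svc -n YOUR_NAMESPACE | pending_loadbalancers
```

If the helper prints any service names, the load balancer mechanism has most likely not assigned addresses to them, so its address pool is worth checking.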

Testing the KNF Package

Monitor with osm ns-list until instantiation is finished, then execute the following command to check the status of your KDU:

osm vnf-show <vnf-id> --kdu orc8r

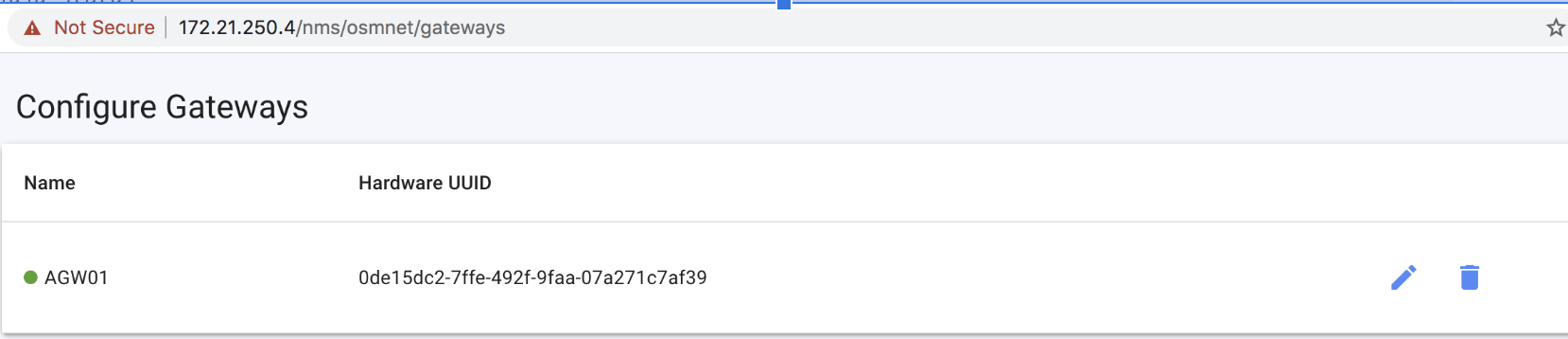

Under the services list you will find all deployed components and their status. Note the external (load balancer) IP address of the nginx-proxy component (172.21.250.4 in the example below), as it will be used to access this KNF’s dashboard.

    ==> v1/Service
    NAME                      TYPE          CLUSTER-IP     EXTERNAL-IP   PORT(S)            AGE
    bootstrapper-orc8r-proxy  LoadBalancer  10.233.44.125  172.21.250.5  443:30586/TCP      58s
    ...
    nginx-proxy               LoadBalancer  10.233.4.76    172.21.250.4  443:31742/TCP      58s
    orc8r-clientcert-legacy   LoadBalancer  10.233.50.131  172.21.250.6  443:30688/TCP      58s
    orc8r-configmanager       ClusterIP     10.233.19.191  <none>        9100/TCP,9101/TCP  58s
    orc8r-controller          ClusterIP     10.233.22.92   <none>        8080/
    ...
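The external IP of a given service can also be pulled out of such a listing with a short filter. This is a sketch under the assumption that only the Service section of the output is fed in; `service_external_ip` is a hypothetical helper name, and the sample rows are copied from the listing above.

```shell
# Print the EXTERNAL-IP column of one LoadBalancer service from a services
# table read on stdin (columns: NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE).
service_external_ip() {
    awk -v name="$1" '$1 == name && $2 == "LoadBalancer" { print $4 }'
}

# Sample rows copied from the listing above.
services='NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE
bootstrapper-orc8r-proxy LoadBalancer 10.233.44.125 172.21.250.5 443:30586/TCP 58s
nginx-proxy LoadBalancer 10.233.4.76 172.21.250.4 443:31742/TCP 58s
orc8r-configmanager ClusterIP 10.233.19.191 <none> 9100/TCP,9101/TCP 58s'

printf '%s\n' "$services" | service_external_ip nginx-proxy   # prints 172.21.250.4
```

With direct access to the cluster, the same address is available from `kubectl get svc nginx-proxy -n YOUR_NAMESPACE -o jsonpath='{.status.loadBalancer.ingress[0].ip}'`, where YOUR_NAMESPACE is again a placeholder.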

An extra step once your KNF is ready is to run the following command, which creates a user that the Magma Orc8r NMS uses to connect to the Magma Orc8r Controller. It has to be executed manually as long as Day-1/2 primitives are not supported in KNFs:

kubectl exec -it -n YOUR_NAMESPACE $(kubectl get pod -n YOUR_NAMESPACE -l app.kubernetes.io/component=controller -o jsonpath="{.items[0].metadata.name}") -- /var/opt/magma/bin/accessc add-existing -admin -cert /var/opt/magma/certs/admin_operator.pem admin_operator

Don’t forget to replace YOUR_NAMESPACE with the namespace where Magma Orc8r was deployed.

Open the dashboard over HTTPS and log in as user admin@magma.test (password: password1234).

Testing functionality

We have prepared a modified Magma AGW, the distributed Packet Core component that runs in a single VM, so that it can be tested together with its Orchestrator (the Orc8r KNF).

You can download the image from here. Please “gunzip” it before uploading it to your VIM.

Note: this is a v1.0.1 image built following the Magma AGW installation instructions, with extra tools prepared by us that communicate with the Orc8r API to self-register the AGW. These scripts are located in /home/magma and have been built to simplify the Day-1 primitives.

Steps for testing are:

a) Download the Magma AGW 1.0.1 VNF Packages and upload them to OSM

wget https://osm-download.etsi.org/ftp/Packages/vnf-onboarding-tf/magma-agw_vnfd.tar.gz

wget https://osm-download.etsi.org/ftp/Packages/vnf-onboarding-tf/magma-agw_nsd.tar.gz

osm nfpkg-create magma-agw_vnfd.tar.gz

osm nspkg-create magma-agw_nsd.tar.gz

You may need to change the vim-network-name corresponding to the management network, from osm-ext to a network that matches your environment.

b) Prepare a ‘params.yaml’ file with instantiation-time parameters to self-register the AGW with your Orchestrator. Populate it with your preferred values, except for the Orc8r IP address, which must match the exposed orc8r_proxy service.

    additionalParamsForVnf:
    - member-vnf-index: '1'
      additionalParams:
        agw_id: 'agw_01'
        agw_name: 'AGW01'
        orch_ip: '172.21.250.10' ## change this to the MetalLB IP address of your orc8r_proxy service.
        orch_net: 'osmnet'
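When several AGWs are deployed, the parameters file can be generated rather than edited by hand. The following is a minimal sketch; `write_agw_params` is a hypothetical helper name, and the values passed in the example are the ones used in this walkthrough.

```shell
# Hypothetical helper: print a params.yaml body for one AGW.
# Arguments: agw_id, agw_name, orch_ip, orch_net.
write_agw_params() {
    cat <<EOF
additionalParamsForVnf:
- member-vnf-index: '1'
  additionalParams:
    agw_id: '$1'
    agw_name: '$2'
    orch_ip: '$3'
    orch_net: '$4'
EOF
}

# Example values from this walkthrough; replace the IP with the address of
# your own Orc8r proxy service.
write_agw_params agw_01 AGW01 172.21.250.10 osmnet > params.yaml
```

Keeping the template in one place makes it harder for the Orc8r address to drift between instantiations.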

c) Launch the AGW.

osm ns-create --ns_name agw01 --nsd_name magma-agw_nsd --vim_account <vim_account> --config_file params.yaml

d) Monitor the OSM proxy charms, which take care of auto-registering the AGW with the Orchestrator.

juju switch <ns_id>

juju status

As mentioned before, the charms run some scripts included in this image which, in this particular example, will:

(1) Reset the AGW hardware ID, so that you can deploy multiple AGWs with the same image.

(2) Configure an “LTE Network” in the Orc8r (if it already exists, because you want more than one AGW in the same network, this step does nothing).

(3) Register the AGW at the Orc8r.

(4) Add testing domains of the Orc8r to /etc/hosts so that the AGW can reach it by name.

(5) Restart the Magma services, so that the AGW tries to activate its registration.
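To illustrate the /etc/hosts step, a hosts entry can be added idempotently as sketched below. This is not the script shipped in the image, whose contents are not shown here; `add_host_entry` is a hypothetical helper, and the hostname in the usage line is an assumed example under the magma.test domain.

```shell
# Append "ip host" to a hosts file unless some line already maps that host.
# Arguments: hosts file path, IP address, hostname.
add_host_entry() {
    hosts_file=$1
    ip=$2
    host=$3
    # Compare the hostname against every name field exactly, so that
    # "controller.magma.test" does not match "bootstrapper-controller.magma.test".
    if awk -v h="$host" '
            { for (i = 2; i <= NF; i++) if ($i == h) found = 1 }
            END { exit !found }' "$hosts_file" 2>/dev/null; then
        return 0                        # entry already present, nothing to do
    fi
    printf '%s %s\n' "$ip" "$host" >> "$hosts_file"
}

# Usage (hostname is an assumed example; run with sufficient privileges):
#   add_host_entry /etc/hosts 172.21.250.10 controller.magma.test
```

Because an existing entry is left untouched, running the helper again with the same hostname does not duplicate lines.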

e) When finished, the Magma Orchestrator dashboard will show the registered AGW01. You are ready to integrate some eNodeBs! (emulators to be provided soon!)